Today, the OpenStack community is proud to announce the release of OpenStack 2026.1 Gazpacho, the 33rd release of the world’s most widely deployed open source cloud infrastructure software. But beyond the features and improvements, Gazpacho tells a deeper story: that of a global community that keeps coming together to solve real infrastructure challenges through open collaboration.

Every OpenStack release is the result of thousands of contributions, countless hours of collaboration, and a shared commitment to building open infrastructure that works in production. Gazpacho is no exception.

A Community Driving Momentum

The Gazpacho cycle reflects growing momentum across the OpenStack ecosystem. Over the past six months, around 500 contributors from 100 organizations worked together to deliver more than 9,000 code changes across OpenStack services, an increase in activity over the previous release, OpenStack 2025.2 Flamingo.

Behind those numbers are developers, operators, users, and organizations from across the globe collaborating in the open to build software that powers private clouds, edge environments, and increasingly, AI infrastructure.

The scale of collaboration doesn’t stop at code. OpenDev’s Zuul CI system continues to run more than one million jobs per release cycle, ensuring that every contribution is tested, validated, and production-ready before it reaches users.

This level of rigor and transparency is what makes OpenStack unique. It’s not just about shipping features; it’s about building trust.

Open Collaboration, Global Impact

One of the most powerful aspects of the OpenStack community is its global nature. Across the wider community, contributors span hundreds of organizations and nearly every region of the world, bringing diverse perspectives and real-world requirements into the project.

In the Gazpacho release, 40% of contributions came from European contributors, highlighting how regional priorities like digital sovereignty are shaping the future of open infrastructure.

This is the strength of open source: the ability to reflect the needs of different industries, geographies, and use cases, all within a shared codebase. Whether it’s telecom operators optimizing for performance, enterprises migrating off proprietary platforms, or governments building sovereign cloud infrastructure, those needs show up directly in the software.

Solving Operator Challenges Together

At its core, OpenStack has always been driven by operators, and Gazpacho continues that tradition.

This release focuses heavily on simplifying operations, improving workload mobility, and enabling infrastructure to run consistently across increasingly complex environments. These priorities don’t emerge in isolation; they come directly from community feedback, real-world deployments, and ongoing collaboration between users and contributors.

For example, enhancements in Ironic introduce more intelligent automation, reducing the need for manual configuration and making it easier to manage bare metal infrastructure at scale. Features like autodetect deployment interfaces and automatic protocol detection reflect a broader goal: letting operators focus less on configuration and more on outcomes.

Similarly, improvements in workload migration, such as enhanced cross-zone strategies and live migration support for sensitive workloads, address one of the most urgent challenges facing infrastructure teams today. As organizations accelerate migration from proprietary platforms, seamless mobility across environments is no longer optional; it’s essential.

Performance and Flexibility for Modern Workloads

The Gazpacho release also brings significant improvements in performance and responsiveness, shaped by the needs of operators running at scale.

Enhancements like parallel live migrations, asynchronous APIs, and better I/O handling in Nova are direct responses to real-world demands for faster, more efficient infrastructure. At the same time, expanded hardware support, from GPUs to FPGAs and beyond, ensures OpenStack can support the growing diversity of modern workloads, including AI and data-intensive applications.

These improvements were not built in isolation. They are the result of collaboration across projects (Nova, Neutron, Ironic, Manila, and Cyborg) and among contributors who understand that infrastructure is a system, not a set of silos.

A Platform That Evolves with Its Community

Thirty-three releases in, OpenStack continues to evolve, not by chasing trends, but by responding to the needs of the operators running the software in production. As of October 2025, the global OpenStack footprint exceeds 55 million compute cores.

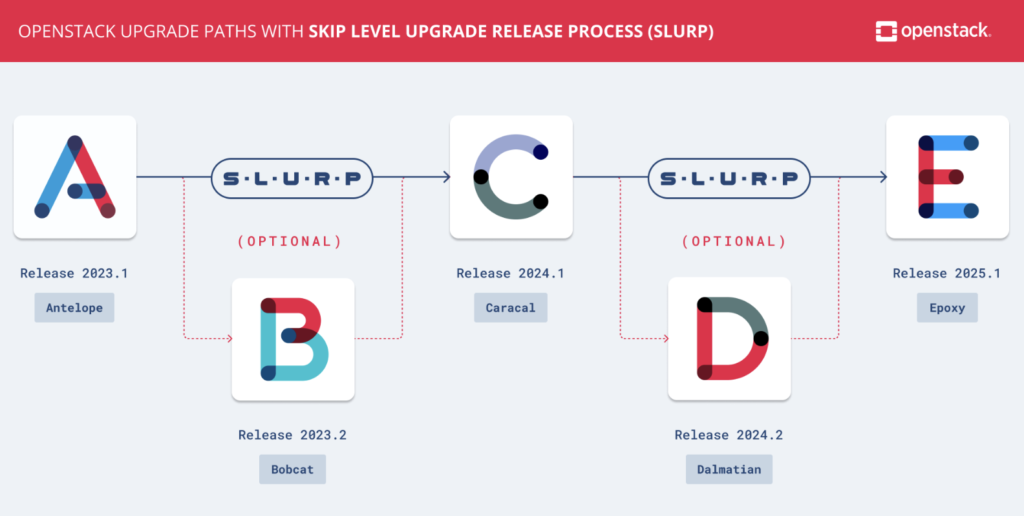

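The SLURP (Skip Level Upgrade Release Process) model is a perfect example. Designed with operators in mind, SLURP lets deployments upgrade once a year, from one SLURP release to the next, instead of every six months, reducing operational burden while maintaining a predictable release cadence. Gazpacho itself is a SLURP release, so deployments running OpenStack 2025.1 Epoxy can upgrade to it directly.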

It’s a reminder that innovation in OpenStack isn’t just about features; it’s also about how the software is delivered, maintained, and operated over time.
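To make the annual cadence concrete, the upgrade rule can be sketched in a few lines. This is a hypothetical helper, not part of any OpenStack tool, and the function names are illustrative only. It assumes the `YYYY.N` release numbering (N = 1 or 2) and that every `YYYY.1` release, starting with 2023.1, is a SLURP release.

```python
# Hypothetical sketch of the SLURP upgrade rule (not an OpenStack API).
# Assumptions: releases are numbered "YYYY.N" with N in {1, 2}, and every
# "YYYY.1" release from 2023.1 onward is a SLURP release.


def is_slurp(series: str) -> bool:
    """Return True if a release series such as "2026.1" is a SLURP release."""
    year, half = series.split(".")
    return int(year) >= 2023 and half == "1"


def next_series(series: str) -> str:
    """Return the release that follows `series`, e.g. "2025.2" -> "2026.1"."""
    year, half = (int(part) for part in series.split("."))
    return f"{year}.2" if half == 1 else f"{year + 1}.1"


def upgrade_targets(series: str) -> list[str]:
    """List the releases a deployment on `series` can upgrade to directly.

    Every release can move to the one right after it. A SLURP release can
    also skip the intermediate release and jump to the next SLURP release.
    """
    targets = [next_series(series)]
    if is_slurp(series):
        targets.append(next_series(targets[0]))
    return targets


if __name__ == "__main__":
    # A 2025.1 (Epoxy) deployment can go to 2025.2 or straight to 2026.1.
    print(upgrade_targets("2025.1"))
    # A 2025.2 (Flamingo) deployment can only go to the next release, 2026.1.
    print(upgrade_targets("2025.2"))
```

Under these assumptions, an operator who upgrades only between SLURP releases moves from 2025.1 to 2026.1 in a single step, which is the once-a-year rhythm described above.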

Looking Ahead

Gazpacho represents more than a release. It’s a snapshot of a community in motion: growing, adapting, and continuing to build infrastructure that meets the needs of today while preparing for what comes next.

From enabling workload migration at scale to supporting AI-driven infrastructure and advancing digital sovereignty, the direction of OpenStack is clear: open collaboration remains the foundation.

And that foundation is only as strong as the people behind it.

To everyone who contributed to the Gazpacho release—code, documentation, testing, feedback, and beyond—thank you. This is your release.