Data volume is growing at an unprecedented rate, often without commensurate growth in computation needs. The world that led to the emergence of the tenets laid out in the GFS and MapReduce papers has seen a dramatic transformation, with over a decade of hardware improvements. Disaggregation of compute and storage is now commonplace, championed by organizations like Netflix, Amazon, and Airbnb. Pushing storage down a layer and treating it as an infrastructure service allows data platform teams to focus on increasing the pace of innovation higher up the stack.

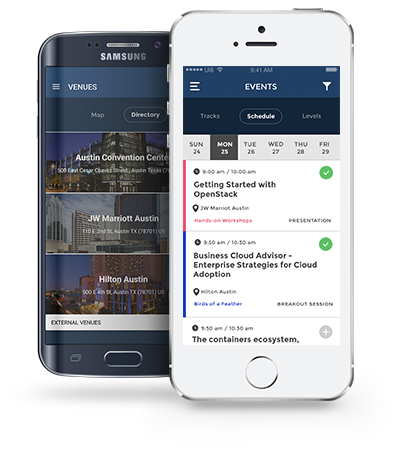

Engineers building data platforms on OpenStack clouds expect a variety of storage services. Object storage is increasingly becoming the centerpiece of data platforms, as it offers in-situ analysis from elastic workload clusters, low cost, and massive scalability. The presentation will detail object-storage-centric data platform architectures, and how to build rock-solid storage services suitable for data-intensive applications.

Learn

- How data platform architectures use object storage to provide nimbler, more elastic workload clusters.

- The anatomy of an object storage service geared to cater to data-intensive applications.

- What hardware is needed for the instances desired by data platform engineers.

- How to configure and use Cinder volume types to provide additional capacity when necessary.