ML systems combine complex algorithmic solutions to process large amounts of data, which requires time and computational resources. Implementations of such systems often rely on various external dependencies, so evaluating them can be a real challenge.

Containers are an efficient means of packaging such systems, but they do not carry hardware passthroughs for programs configured for specific hardware; virtual machines (VMs) offer a better way to guarantee this replicability.

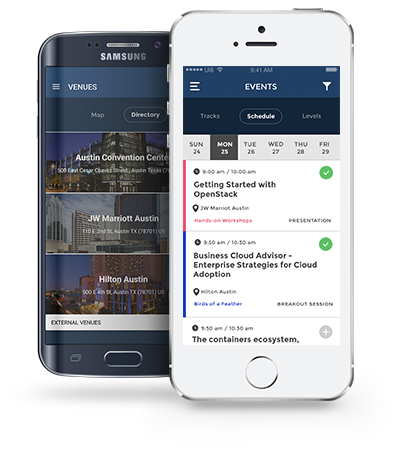

In our work, an entrypoint has been designed to allow the execution of the VMs regardless of their implementation. The interfaced VMs have then been coupled with a batch processing orchestrator that schedules their execution in an OpenStack project.
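To make the idea of an implementation-independent entrypoint concrete, here is a minimal sketch in Python. It is not the entrypoint used in this work: the class names, the `--input`/`--output` flags, and the paths are all hypothetical. It only shows how differently implemented systems can be hidden behind one call that the orchestrator uses to build the command to run inside a VM.

```python
from dataclasses import dataclass
from typing import Dict, List, Protocol


class Entrypoint(Protocol):
    """Hypothetical contract: turn a dataset location into a command line."""

    def command(self, dataset_dir: str, output_dir: str) -> List[str]: ...


@dataclass(frozen=True)
class ScriptEntrypoint:
    """A system started through a shell script shipped in the VM image (assumed)."""

    script: str

    def command(self, dataset_dir: str, output_dir: str) -> List[str]:
        return [self.script, "--input", dataset_dir, "--output", output_dir]


@dataclass(frozen=True)
class PythonModuleEntrypoint:
    """A system started as a Python module (assumed)."""

    module: str

    def command(self, dataset_dir: str, output_dir: str) -> List[str]:
        return ["python3", "-m", self.module, dataset_dir, output_dir]


def build_job(system: str, entrypoint: Entrypoint,
              dataset_dir: str, output_dir: str) -> Dict[str, object]:
    """Describe one execution without knowing how the system is implemented."""
    return {"system": system, "argv": entrypoint.command(dataset_dir, output_dir)}
```

With this shape, the orchestrator only ever calls `build_job`; adding a system with a new launch mechanism means adding one small class rather than changing the scheduling code.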

As a result, we are able to schedule the execution of any system/dataset pair over a cluster and thereby optimize the allocation of resources. This setup has been successfully used during a NIST evaluation.
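As an illustration of scheduling system/dataset pairs over a cluster, the sketch below enumerates every pair and assigns each one to the currently least-loaded worker, longest jobs first. This greedy heuristic is an assumption chosen for brevity and is not necessarily what the orchestrator described here does; the `cost` function and the worker count are hypothetical inputs.

```python
import heapq
from itertools import product


def schedule(systems, datasets, cost, n_workers):
    """Greedy longest-job-first assignment of (system, dataset) pairs to workers.

    `cost` is a caller-supplied estimate of how long one pair takes to run.
    Returns a dict mapping worker index to its ordered list of pairs.
    """
    # Longest jobs first, so large jobs are spread out before small ones fill gaps.
    jobs = sorted(product(systems, datasets), key=cost, reverse=True)

    # Min-heap of (accumulated load, worker index): the top is the least-loaded worker.
    heap = [(0.0, worker) for worker in range(n_workers)]
    heapq.heapify(heap)

    plan = {worker: [] for worker in range(n_workers)}
    for job in jobs:
        load, worker = heapq.heappop(heap)
        plan[worker].append(job)
        heapq.heappush(heap, (load + cost(job), worker))
    return plan
```

For example, `schedule(["a", "b"], ["x", "y"], cost, 2)` distributes the four pairs over two workers; in a real deployment each worker would correspond to a VM slot in the cluster.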

The Multimodal Information Group, which is part of the National Institute of Standards and Technology (NIST), often performs advanced evaluations and benchmarking of the performance of computer programs.

Evaluations are performed on complex systems that often rely on various external dependencies. These systems may have to be executed multiple times on various data sets, which requires a lot of computational resources. In addition, in this case the programs were configured on a specific node, with a specific set of hardware components.

Planning such evaluations raises many questions. The following ones will be covered through a use case:

- How to standardize the distribution format of the systems to guarantee their replicability and reusability?

- How to design an entrypoint to allow multiple executions of the same system?

- How to efficiently schedule these different executions on a cluster?

This session may be useful for anyone who would like to learn about distributing hardware-specific machine learning systems, as well as automating batch processing on an OpenStack cluster.